What if silos were just for grain?

When you set out to do anything (hang a picture, bake an Anzac biscuit, write a newsletter, hack together some code), there are many base-level jobs you can skip, simply because someone has done them before and openly shared their experience, or perhaps offered a product to make your life easier. Even those products (e.g. beehiiv) are themselves built from tools and services that make putting together a scalable newsletter company easier.

The same is largely true in agronomy, with regional factsheets, variety guides and weed control tools generally available to farmers and would-be farmers. Of course, this doesn’t make you a farmer overnight! Equally, having access to ChatGPT and Claude does not make me a professional developer; however, it speeds up my development and, on a global scale, lowers the barrier to software-based innovation.

A well-worn Anzac biscuit recipe from my grandmother’s 27th edition of the CWA Cookery Book and Household Hints. It isn’t on this page, but we have our own version of this recipe, adjusted to fit our tastes.

Remember the weathered recipe book in your kitchen? It tells the story of years, decades, perhaps generations of iterative developments in cooking for your family, all built from a base recipe that was initially shared. This is exactly what open-source tech in agriculture is: taking the same principles of recipe sharing and applying them to the software, hardware and data used in precision ag. It’s about breaking down silos (without grain loss) and enabling better collaboration (there’s even a silo recipe from 1919).

So why is this needed in agtech? Well, we’re slowing ourselves down by rehashing the same basic code, collecting the same data and getting caught up in boilerplate work. Developments in the broader tech field, from cheaper hardware to easier coding, mean that the lack of open-source datasets is now the biggest barrier to many vision- or text-based applications for precision ag. Check out NVIDIA CEO Jensen Huang’s recent announcement of the Jetson Orin Nano Super, the latest addition to their embedded computing range. The number of trillions of operations per second (TOPS) you get per US dollar has increased dramatically over the last seven years.

With easier access to performant edge computing, and LLM-based tools like ChatGPT, Claude, Cursor and Copilot helping with code, the biggest barrier to innovation is increasingly data. Flipping this on its head, the most impactful investment growers and industry can make to drive innovation is the collection and publication of open-source datasets. But more on that in future issues.

With the latest release of NVIDIA’s Jetson Orin Nano Super, the TOPS/USD is rapidly improving.
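To make the TOPS-per-dollar idea concrete, here is a small sketch that simply divides headline compute by list price. The figures are assumptions taken from NVIDIA’s launch announcements as I understand them (about 40 TOPS at US$499 for the original Jetson Orin Nano Developer Kit, and 67 TOPS at US$249 for the Super). They are peak marketing numbers rather than measured throughput, so check current spec sheets before relying on them.

```python
def tops_per_usd(tops: float, price_usd: float) -> float:
    """Compute per dollar: trillions of operations per second (TOPS) per US dollar."""
    if price_usd <= 0:
        raise ValueError("price_usd must be positive")
    return tops / price_usd


# Assumed launch figures (headline peak TOPS, list price in USD); verify against NVIDIA's spec sheets.
KITS = {
    "Jetson Orin Nano Developer Kit": (40, 499),
    "Jetson Orin Nano Super Developer Kit": (67, 249),
}

if __name__ == "__main__":
    for name, (tops, price) in KITS.items():
        print(f"{name}: {tops_per_usd(tops, price):.3f} TOPS/USD")
```

On these assumed figures, the Super delivers roughly 0.27 TOPS per dollar versus roughly 0.08 for its predecessor: more than three times the compute per dollar within a single product line.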

Welcome

And with that, welcome to the OpenSourceAg newsletter, one that hopes to highlight and connect the recipe sharers, projects and open-source developers building tools everywhere. It started out as an idea two years ago, and after a PhD thesis, moving countries a few times and chemotherapy, it’s finally ready to launch.

Thank you for the unexpected amount of interest. With new open-source projects appearing in all aspects of ag-related tech (or agtech), I have wanted to build a place and a community to share, learn and explore new collaborations. It’s slightly later than I had hoped after some recent setbacks, but 2025 is the year, and this newsletter is a place to start. With over 325 subscribers, it’s exciting to see how many of you, from such diverse parts of the industry and the globe, are equally passionate about discovering more in open-source development for agriculture. Plus, I’ll be sharing some of the latest agtech research, OpenWeedLocator updates, and longer-form versions of various Bluesky/X/LinkedIn posts.

Most importantly though, I would love to make this as much of a conversation as possible. If you have any comments, ideas, questions or anything else, feel free to leave them below, reply via email or on social media and I will do my best to get back to you and share it in this newsletter. If you have your own project or know of others, let me know and I can add it to the Featured Project space below, and there is the accompanying OpenSourceAg repository with a growing list of datasets and projects to sift through.

The OpenSourceAg repository: if a dataset is missing (and many are), open a pull request and I can add it to the list.

And who am I exactly? Well, I’m no chef, not really a developer, hardly a farmer (or farmer’s son), but I work in precision weed control as a researcher. As a self-taught coder who started with Python back in 2018, I have benefited greatly from open-source libraries and from people generous enough to share their experience. And I am quite certain that the machine learning field would not be where it is today if openness and sharing weren’t the norm.

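So, in this newsletter we’ll cover:

The 7 principles of open-source development

Practicalities: How can you get started with image data collection, annotation and sharing?

Featured Project | OpenWeedLocator

Interesting Reads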

And don’t miss the next edition: subscribe so it lands in your inbox every second Tuesday (#AgtechTuesday). I’ll be presenting some ideas on how agtech companies can benefit from opening up their own work, and the nuances of the approach. How many ag companies have active and useful GitHub repositories? Until then, check out the rest below.

The 7 principles of open-source development

If you search for anything related to machine learning, you’ll be bombarded by a plethora of projects, guides, documentation, courses and tools, usually shared openly on the internet by their creators. I learnt to code through the PyImageSearch blogs back in the day and, like many students, with Andrew Ng’s Machine Learning Specialisation on Coursera. I’ve used Google’s TensorFlow, Meta’s PyTorch, Chollet’s Keras and Joseph Redmon’s YOLO architecture, and the list is endless. It is easy to assume that this field has always been open and receptive to sharing tools and ideas. Yet that hasn’t always been the case.

In 2007, Sören Sonnenburg et al. (coauthored by the likes of Yann LeCun) published The Need for Open Source Software in Machine Learning, highlighting a clear need for an open-source approach in the ML field to capitalise on the advancements in tools and architectures being developed.

Open source tools have recently reached a level of maturity which makes them suitable for building large-scale real-world systems…However, the true potential of these methods is not used, since existing implementations are not openly shared, resulting in software with low usability, and weak interoperability.

Sound familiar? Agriculture has access to a huge array of advanced tools and complex algorithms, yet interoperability is low because of the lack of sharing. The ISOBUS standard is potentially making headway here, but the distinct lack of open tools and implementation documentation largely restricts development of ISOBUS-related equipment to companies with dedicated development teams and substantial budgets for costly accreditation. The sentiment echoes one of the most widely shared phrases of all time, and the origin of Google Scholar’s ‘Stand on the shoulders of giants’ tagline.

If I have seen further it is by standing on the shoulders of giants. (Isaac Newton, 1675)

The authors distilled the benefits of an open approach into 7 key themes, broadly covering reusability, error checking, persistent availability and collaboration. While their intent was scientific and focused on the ML field, with 18 years of hindsight it is clear that these pillars were pretty spot on.

7 Key Advantages of Open Source Development

Improved reproducibility and fairer comparison of methods

Faster error checking and bug fixes

Building on existing resources (instead of creating basic biscuit recipes from scratch)

Better access to useful tools for research and development even after changes or failures in companies

Leveraging multiple advances in related fields

Faster adoption with wider user bases

Collaboration towards better standards for interoperability


In my own experience, these 7 benefits have held true. For example, internet strangers have found errors in my code that I had missed. And the organic reach of the OWL project, and the diversity of solutions built on top of the OWL system, would not have been possible if I had kept it closed.

While I’m hyping the approach, it is important to say that open source is far from a rosy utopia for tech development. There are many challenges in licensing, protecting IP, managing communities and generating revenue. That said, two big open-source platforms, Hugging Face and Roboflow, have raised significant funding of late: the former is now valued in the billions and the latter recently raised US$40M. In my opinion, though, the efficiency of sharing base recipes for interoperability, security and adoption is unparalleled.

Practicalities: How can you get started with image data collection, annotation and sharing?

I would love to keep parts of this newsletter as applied and practical as possible—so every edition gives you the opportunity to contribute to or benefit from an open-source project. This week it’s image data collection.

Fortunately, the International Weed Recognition Consortium (IWRC) has recently uploaded image collection protocols so you can get started. It’s as simple as taking your phone out when you’re in a field, park or driveway and taking consistent images of weeds. The full protocol I developed for the IWRC is linked below.

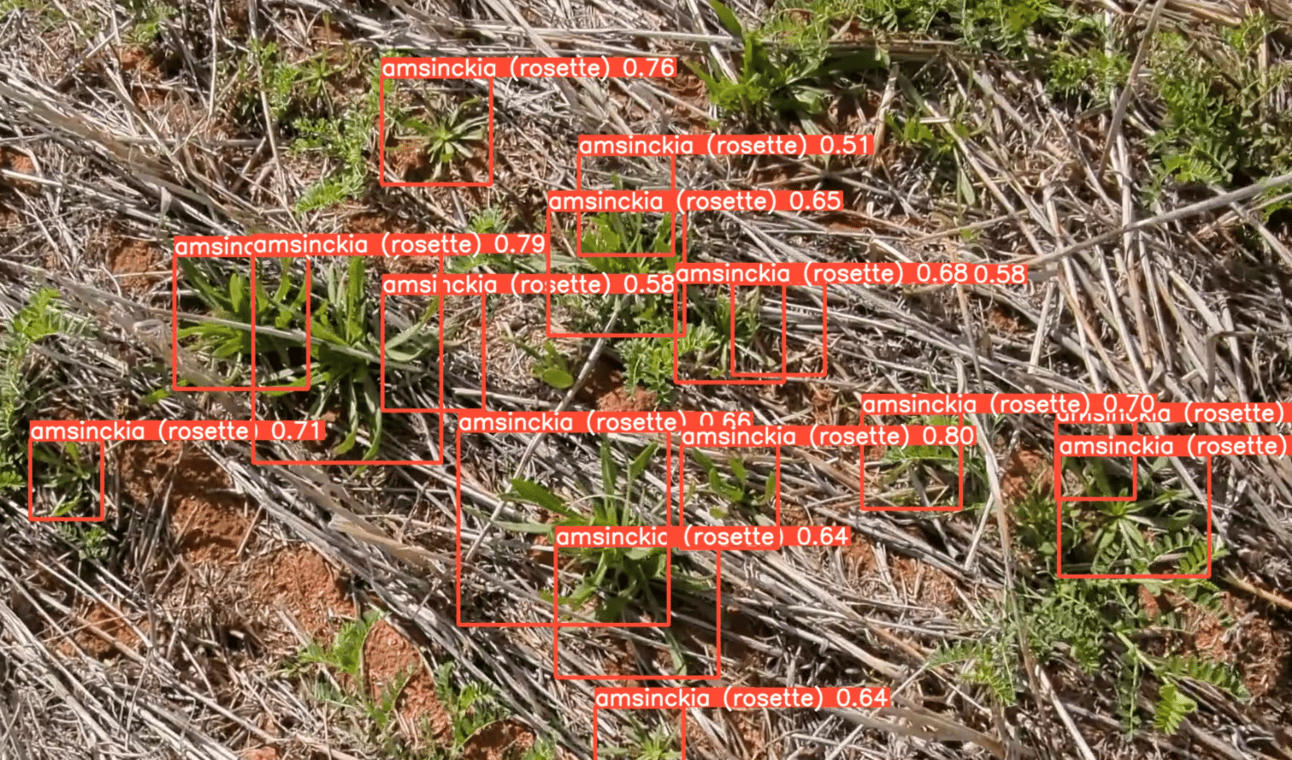

With this approach, it’s possible to collect thousands of images without the expense and complexity of specialised hardware. We’ve developed annotated datasets and detection algorithms for weeds in lawns, blue lupins in narrow-leaf lupins and amsinckia in chickpeas, among others. You certainly don’t need a huge field or access to farms: while going through chemotherapy, I’d spice up my daily walks by trying to image as many blue lupins as I could in the local suburban area.

Detection of amsinckia in chickpeas based on about 130 images collected from a mobile phone.

The complete process from collection through to annotation, training and inference is expertly covered in this tutorial from Edje Electronics. And once you have that annotated dataset, make sure to upload it to WeedAI and share it with relevant ag contextual metadata. WeedAI is an open-source image sharing platform for annotated images of weeds. With over 30,000 images annotated with bounding boxes, it’s a good place to start for any project.

The WeedAI platform is a place to share annotated images of weeds, with standardised metadata collection.
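Many annotation tools can export boxes in the YOLO text format (a class id followed by the box centre x, centre y, width and height, each normalised to 0-1), while COCO-style JSON, a common format for sharing detection datasets, stores boxes as absolute pixel `[x_min, y_min, width, height]`. Below is a minimal conversion sketch that assumes YOLO-format labels as input. It does not cover WeedAI’s upload schema or its agricultural-context metadata fields, so check WeedAI’s own documentation for exactly what it expects.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CocoBox:
    """COCO-style box: top-left corner plus width/height, in absolute pixels."""
    x_min: float
    y_min: float
    width: float
    height: float


def yolo_line_to_coco(line: str, img_w: int, img_h: int) -> tuple[int, CocoBox]:
    """Convert one YOLO label line ('class cx cy w h', normalised to 0-1) to (class_id, CocoBox)."""
    parts = line.split()
    if len(parts) != 5:
        raise ValueError(f"expected 5 fields, got {len(parts)}: {line!r}")
    class_id = int(parts[0])
    cx, cy, w, h = (float(p) for p in parts[1:])
    box_w, box_h = w * img_w, h * img_h
    # YOLO stores the box centre; COCO stores the top-left corner.
    x_min = cx * img_w - box_w / 2
    y_min = cy * img_h - box_h / 2
    return class_id, CocoBox(x_min, y_min, box_w, box_h)


def coco_annotation(ann_id: int, image_id: int, category_id: int, box: CocoBox) -> dict:
    """Build a minimal COCO 'annotations' entry for a bounding box (no segmentation)."""
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "bbox": [box.x_min, box.y_min, box.width, box.height],
        "area": box.width * box.height,
        "iscrowd": 0,
    }


if __name__ == "__main__":
    # Hypothetical label for a 4000 x 3000 phone photo: class 0, centred, 10% wide, 20% tall.
    class_id, box = yolo_line_to_coco("0 0.5 0.5 0.1 0.2", img_w=4000, img_h=3000)
    # YOLO class ids start at 0; COCO category ids are often 1-based, so map them to your category list.
    print(coco_annotation(ann_id=1, image_id=1, category_id=class_id + 1, box=box))
```

The image width and height have to come from the actual image files, since YOLO labels carry no pixel dimensions of their own.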

Featured Project | OpenWeedLocator

The OpenWeedLocator (OWL) is an open-source project aiming to make precision ag tools (weed detection specifically) more widely available, modifiable and low-cost. It enables site-specific weed control without the barriers of proprietary hardware or expensive software licenses. The OWL is an embedded, real-time system built entirely from off-the-shelf parts: running on the Raspberry Pi range of computers, it detects weeds in fallow by their green colour (green-on-brown) and targets them directly through an actuated 12V output. As an open-source project, OWL is a base recipe for weed detection that opens the door for farmers, researchers and developers to collaborate on, adapt and improve the technology.

You can find the full guide and instructions for use on the OpenWeedLocator repository.
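To illustrate the idea behind green-on-brown detection, here is a minimal, independent sketch using one common vegetation index, excess green (ExG = 2G - R - B), which is high for plants and near zero for bare soil. It is not OWL’s code; OWL ships its own detection algorithms and relay logic. The threshold, the number of lanes (each standing in for a hypothetical nozzle or relay) and the minimum pixel count are illustrative assumptions you would tune for your camera and conditions.

```python
from __future__ import annotations

import numpy as np


def exg_mask(image_rgb: np.ndarray, threshold: int = 25) -> np.ndarray:
    """Return a boolean mask of 'green' pixels using excess green, ExG = 2G - R - B.

    Expects an RGB uint8 image of shape (H, W, 3). Frames read with OpenCV are BGR,
    so reverse the channel order first (e.g. frame[..., ::-1]).
    """
    img = image_rgb.astype(np.int16)  # signed, so 2G - R - B can go negative without wrapping
    r, g, b = img[..., 0], img[..., 1], img[..., 2]
    return (2 * g - r - b) > threshold


def lanes_to_activate(mask: np.ndarray, n_lanes: int = 4, min_pixels: int = 50) -> list[int]:
    """Split the frame into equal vertical lanes and return those with enough green pixels.

    Each lane stands in for a hypothetical nozzle/relay across the camera's field of view.
    """
    edges = np.linspace(0, mask.shape[1], n_lanes + 1).astype(int)
    active = []
    for lane in range(n_lanes):
        green_pixels = int(mask[:, edges[lane]:edges[lane + 1]].sum())
        if green_pixels >= min_pixels:
            active.append(lane)
    return active


if __name__ == "__main__":
    # Synthetic 100 x 200 'fallow' frame: brown soil with one green weed patch on the right.
    frame = np.zeros((100, 200, 3), dtype=np.uint8)
    frame[:] = (120, 90, 60)               # soil: ExG = 180 - 120 - 60 = 0
    frame[40:60, 160:190] = (40, 160, 40)  # weed: ExG = 320 - 40 - 40 = 240
    print(lanes_to_activate(exg_mask(frame)))  # prints [3]
```

In a real system, the active lanes would then be mapped to output pins that switch the 12V solenoids, with timing adjusted for travel speed and nozzle offset.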

An OWL mounted on a SwarmFarm robot for large-scale image data collection.

Looking ahead, the OWL roadmap involves refining the software for compatibility with accelerators like the Hailo-8, enabling real-time inference of green-on-green (GoG, i.e. weeds in crop) algorithms on a Raspberry Pi. That said, recent tests I conducted show you can get up to 12 FPS on a Raspberry Pi 5 alone (without any acceleration) when running YOLOv8-N models. This work is part of a project at the University of Copenhagen, where we are developing a 12 m spot-spraying system along with all the communication protocols and power management it requires.

Prototype mounting of the first OWL unit on a sprayer at the University of Copenhagen. This system will be developed into a 12m wide platform.
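If you want to check frame rates on your own hardware, a simple timing harness is enough. The sketch below is a generic, assumed approach (not the setup behind the 12 FPS figure): it runs a few untimed warm-up calls, then averages over the remaining frames with `time.perf_counter`. The `fake_infer` stand-in is hypothetical and only there so the example runs without a model.

```python
from __future__ import annotations

import time
from typing import Any, Callable, Sequence


def measure_fps(infer: Callable[[Any], Any], frames: Sequence[Any], warmup: int = 5) -> float:
    """Return average frames per second of `infer` over `frames`, excluding `warmup` calls."""
    if len(frames) <= warmup:
        raise ValueError("need more frames than warm-up iterations")
    # The first few calls often include one-off costs (memory allocation, lazy init), so skip timing them.
    for frame in frames[:warmup]:
        infer(frame)
    timed = frames[warmup:]
    start = time.perf_counter()
    for frame in timed:
        infer(frame)
    elapsed = time.perf_counter() - start
    return len(timed) / elapsed


if __name__ == "__main__":
    # Hypothetical stand-in "model" that takes ~10 ms per frame; swap in a real detector to benchmark it.
    def fake_infer(frame):
        time.sleep(0.01)

    print(f"{measure_fps(fake_infer, list(range(30))):.1f} FPS")
```

With the Ultralytics package installed, you could pass something like `lambda f: model(f, verbose=False)` with `model = YOLO("yolov8n.pt")` and a list of camera frames. Expect results to vary with input size, export format, cooling and whatever else the Pi is doing at the same time.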

The OWL was originally developed as part of my PhD and is being actively worked on, so I’ll keep sharing updates on OWL progress here. I certainly welcome any suggestions or tips on what should be done or features that may be useful.

Interesting Reads

The authors found improved weed detection performance in pastures by replacing the green channel of RGB images with depth from an RGB-D camera | Paper Link

LMMs might take on some of the annotation burden by generating images of weeds or fruit for us. The authors found this approach worked well for fruit detection in real-life orchards, even when trained only on LMM-generated data | Paper Link

CWD30, a dataset of over 200k images of crops and weeds in various environments | Paper Link | Dataset Page
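The first paper’s idea of swapping the green channel for depth is easy to picture with array operations. This is a minimal sketch assuming the depth map is already aligned to the colour image and given in metres, with a hypothetical `max_depth` used to scale depth into the same 0-255 range as the colour channels. The paper’s own preprocessing (scaling, inversion, alignment) may well differ.

```python
import numpy as np


def replace_green_with_depth(rgb: np.ndarray, depth: np.ndarray, max_depth: float) -> np.ndarray:
    """Return a copy of an RGB uint8 image with the green channel replaced by scaled depth.

    `depth` must be aligned to `rgb` (same height and width); values beyond
    `max_depth` are clipped so the result fits the 8-bit range.
    """
    if rgb.shape[:2] != depth.shape:
        raise ValueError("depth map must match the image height and width")
    if max_depth <= 0:
        raise ValueError("max_depth must be positive")
    scaled = np.clip(depth, 0, max_depth) / max_depth * 255
    out = rgb.copy()
    out[..., 1] = scaled.astype(np.uint8)  # channel order is now R, depth, B
    return out
```

Because the output still has three 8-bit channels, it can be fed to a standard three-channel detector without changing the network’s input layer.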

Until next time (just two weeks away), keep building and sharing. I’m looking forward to hearing your feedback and content suggestions.

Cheers,

Guy